As I wrote last month, a big part of why I don’t buy the argument for age restrictions on social media is that critics haven’t done a good job defining what specific harms they are trying to stop with their invasive regulation. Two thinkers I greatly respect have unpacked the term “social media” in ways that could have major benefits for both the child safety debate and the case for democratic representation online.

Cornell University professor danah boyd is an Internet legend. For example, she helped coin and popularize the idea of context collapse — when something you intended for one of your communities suddenly appears to a totally different group of people (or to the whole world). [I was also lucky to see boyd speak on a panel discussion when I was still at Harvard.]

boyd recently argued that the sharing aspect of “social media” has fallen by the wayside in favor of passive media consumption, with only a thin facade of simulated relationships:

…we now live in a world of parasocial media. Parasocial relationships are one-sided connections, where individuals keep tabs on the lives and movements of people – like celebrities – who do not know us and feel no pressure to reciprocate. In a parasocial world, people dedicate their attention and emotions to tracking the dramas of individuals who exist at a distance. Parasocial relationships can be emotionally intense, but they do not produce the kinds of social fabric that anchor us when we are struggling…

Social media stopped being primarily about connecting socially a long time ago. People still use a range of technologies to connect to friends and build relationships, but we don’t call those technologies – like texting and group chats – “social media.”

Ben Werdmuller, Senior Director of Technology at ProPublica, is another Internet veteran, with deep roots in the open Web. He’s built and invested in multiple open platforms himself and is an active participant and writer on the modern open social movement (Fediverse, Atmosphere, and so forth).

In a talk last October, Werdmuller separated social media into two different things:

Social media is the town square. We've heard a lot about the town square online over the last few years: a global commons where we can all learn from each other and build audiences at scale. Social networking, on the other hand, is a way to support communities of any kind using technology. These two things can interact and intersect, but they are not the same.

For what it's worth, because of its emphasis on scale, I think social media is more predisposed to centralization. And because of its emphasis on relationships and communities, social networking is more predisposed to decentralization.

Jakob Nielsen: participation inequality

Both boyd and Werdmuller talk about a split between the scaled mass media experience and the decentralized world of direct human connection. This may be inherent in the nature of large communities. Way back in 2006, usability expert Jakob Nielsen introduced the notion of participation inequality to the online world:

All large-scale, multi-user communities and online social networks that rely on users to contribute content or build services share one property: most users don't participate very much. Often, they simply lurk in the background.

In contrast, a tiny minority of users usually accounts for a disproportionately large amount of the content and other system activity…

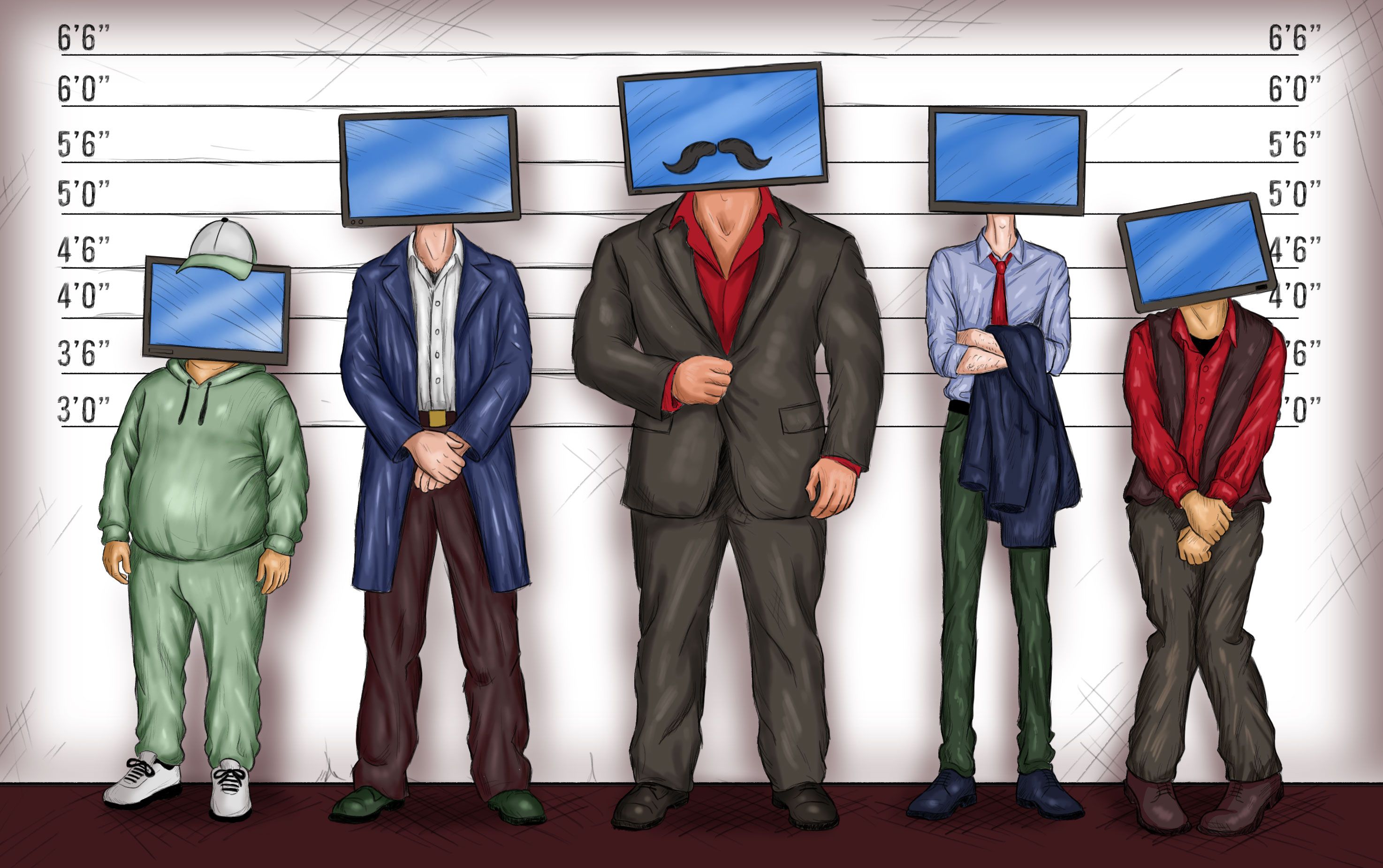

User participation often more or less follows a 90–9–1 rule:

90% of users are lurkers (i.e., read or observe, but don't contribute).

9% of users contribute from time to time, but other priorities dominate their time.

1% of users participate a lot and account for most contributions: it can seem as if they don't have lives because they often post just minutes after whatever event they're commenting on occurs.

Subsequent research has tended to support Nielsen’s argument, with two key exceptions: people appear to like and share content more often than they create new content, and there are some indications that members of smaller or specialist communities participate at higher rates.

If 90% or more of all social media users are just passively consuming feeds, maybe we should stop treating these platforms as an exception. How would the discussion change if we looked at social media as just a variation of mass media that automates the human editing process with open submissions, “like” buttons, and recommendation algorithms?

If social media is just media, we could consider harms to kids and teens as a question of content, not anything inherent in the medium itself. Are the social media companies doing a good enough job identifying and clearly labelling potentially harmful content, so parents can decide whether to let their children see it? Is a recommendation algorithm liable for pushing something inappropriate into a kid’s feed or encouraging them to keep consuming endlessly?

This could put us into the murky territory of age ratings, which have been around (and controversial) for decades for film, music, and video games. There is also real concern that more liability for content harms would cause these platforms to just pull down vast swathes of important content to avoid even a hint of risk. We already see a version of this problem in overbroad filtering systems in schools.

Even if we want to avoid the moderation / ratings question, a media frame could be useful by letting us talk about whether there should be more rules around jumping from lurker to creator. I’ve written before about how open, anonymous signups lead to a lot of harm, like Meta making 10% of its ad revenue from scams and fraud.

This would clarify another part of the debate over kids on social media. Requiring age verification to become a creator might be less objectionable than requiring it to consume content. Features like live streaming on TikTok already require users to submit ID and prove they are 18 or older.

Communities are not content

Werdmuller’s “social networking” distinction also helps explain why big-company policy enforcement feels so wrong when applied to smaller online communities. Our social networks are now being governed by companies whose primary concern is growing their incredibly lucrative mass-media businesses.

It’s increasingly clear that the largest social media platforms have stopped caring about the social-networking part of their business, except to the degree it gets people back into the feed. boyd cites a 2022 paper that found big platforms have almost totally dropped the word “sharing” from their public communications. And Facebook has blatantly shifted its focus away from friends and family toward creator content.

We should not quietly accept the atrophy of social networking features. The ability to make authentic human connections online is one of the best things the Internet has brought us.

Since the biggest platforms only got to their mass-media business through social networking, maybe they should not be able to walk away from it so easily. Imagine requiring the biggest media-centric platforms to fund free or low-cost social networking tools in good faith, not as a sideshow. This could be a path to getting the biggest platforms to pay for the external harms they’ve imposed on society.

Protecting social networking could also improve the debate over children online. We would not need to demonize the entire concept of connecting online just because a consumption algorithm might divert kids into doomscrolling. Instead, we would be able to discuss sensible ways to help children build healthy and safe social networks at every age.

And, of course, we should require social networking tools to give the communities they serve a voice in their rules, due process before ejecting someone, and the freedom to Exit.

If we take boyd and Werdmuller’s advice to stop pretending that modern global media businesses like Meta, TikTok, and YouTube are just old-school pipes for social connection, we can start to govern their behavior and harms appropriately, and take back control of the spaces where we actually connect with each other in authentic ways.

Ideas? Feedback? Criticism? I want to hear it, because I am sure that I am going to get a lot of things wrong along the way. I will share what I learn with the community as we go. Reach out any time at [email protected].